CBS News Texas: Local News, Weather & More

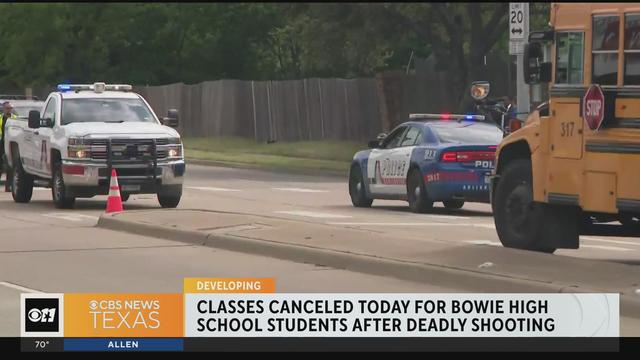

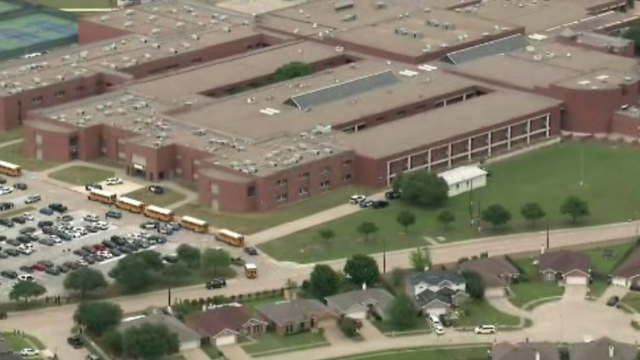

Classes at James Bowie High School are canceled for Thursday.

The "all in" comment has been misinterpreted by some fans and media members, who became critical of Jones for not being "all in" on the free agent market this spring.

If you're feeling lucky, the overall odds of winning any prize in the game are one in 3.36.

Harvey Weinstein's 2020 conviction on felony sex crime charges has been overturned by the State of New York Court of Appeals.

Noah Hanifin broke a tie with an unassisted goal late in the second period and the Stanley Cup champion Vegas Golden Knights beat the top-seeded Dallas Stars 3-1 on Wednesday night to take a 2-0 lead in the first-round series.

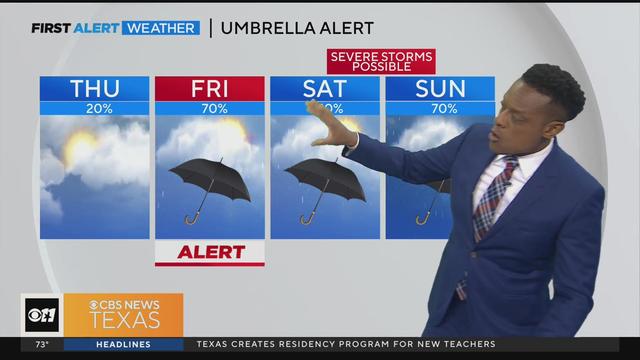

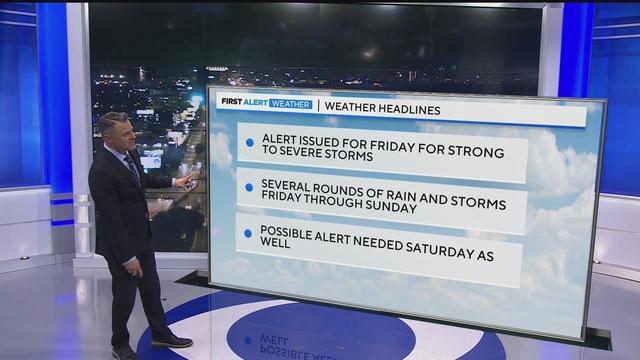

A few isolated showers and storms are possible, but most areas will remain dry for much of today.

We've spent long hours filling up our Big Green NFL Draft Scouting Notebook searching for the perfect draft prospects that can propel the Dallas Cowboys to greener pastures.

CDC's provisional figures show a 2% decline in births from 2022 to 2023.

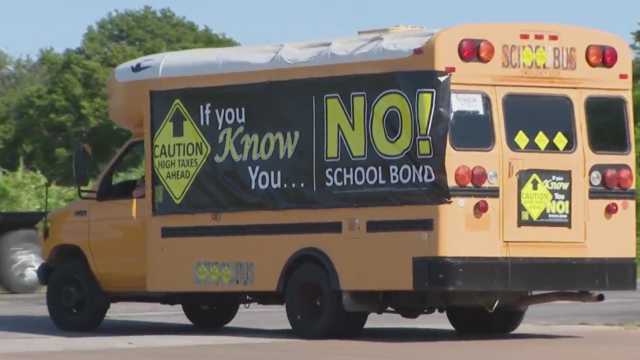

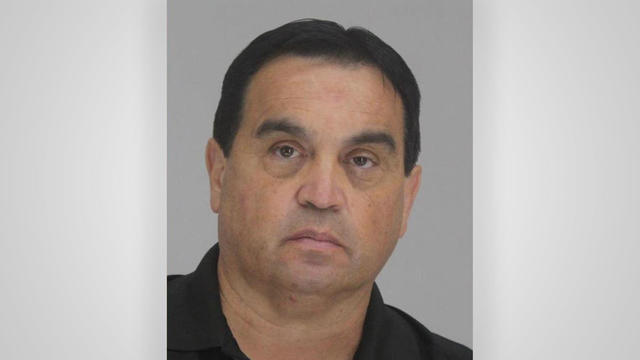

An already heated campaign over an upcoming school bond referendum now involves the chairman of the Hood County Republican Party being arrested and facing a felony charge.

"If we were to lose control of the Corporation and its assets it would allow the Defendants to remove us from our home, as they have already threatened to do," the Rev. Mother wrote. "I pray they be stopped."

Don Steven McDougal, a family friend, was indicted by a Polk County grand jury in connection with the death of an 11-year-old girl.

Bois D'Arc Lake is now open to families, boaters and fishers just an hour northeast of DFW in Fannin County.

Classes have been canceled at Arlington's Bowie High School after a shooting. Police arrested a 17-year-old who they've charged with murder in the death of 18-year-old Etavian Barnes.

School meal programs are headed in a different, healthier direction. The U.S. Department of Agriculture announced that new meal standards will limit added sugar starting in the 2025-2026 school year. Sodium content will also be limited starting in the 2027-2028 school year.

The fertility rate in the U.S. has been trending downward for decades, and a new report shows it is now at its lowest in a century. According to data from the CDC's National Center for Health Statistics, the number of births dropped 2% between 2022 and 2023.

Buffalo Bills safety Damar Hamlin suffered a cardiac arrest in the middle of a game in 2023. His cardiac arrest on the NFL field is helping a national TV audience see the importance of access to defibrillators and of knowing how to perform CPR.

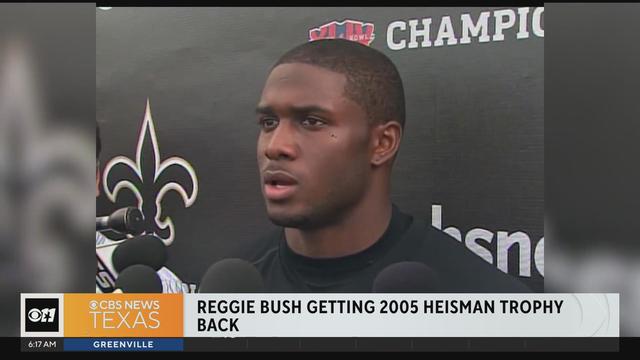

Reggie Bush is getting his Heisman Trophy back. The Super Bowl champion and star running back for USC was stripped of college football's highest honor in 2010. But now, student-athletes can receive compensation for their name, image and likeness.

A few isolated showers and storms are possible Thursday, but most areas will remain dry for much of the day.

Isolated showers will be possible Thursday in North Texas but the CBS News Texas weather team expects the cap to remain in place.

A scammer a North Texas woman met on Instagram claimed to be a German cardiologist, and for months, the two messaged back and forth, building what she thought was a true relationship.

Texas police departments have the discretion to determine the frequency and extent of additional driving training for their officers. While some require driving training yearly or every other year, others do not.

Some departments opt to melt the firearms down, while others choose to crush them. However, there are instances where firearms, or at least parts of them, escape destruction altogether.

Several police departments told the CBS News Texas I-Team they were unaware of this practice, even though it was stated in the contracts they signed with the company, Gulf Coast GunBusters.

It's a complicated process that not everyone qualifies for.

The WNBA team will stay in Arlington for two more years before relocating to the Kay Bailey Hutchison Convention Center.

Luka Doncic scored 32 points and the Dallas Mavericks overcame the return of Clippers superstar Kawhi Leonard to beat Los Angeles 96-93 and tie their Western Conference first-round playoff series at a game apiece.

They found him guilty – now four jurors are explaining how they were convinced to convict Dr. Raynaldo Ortiz.

A Texas grand jury indicted more than 140 migrants on misdemeanor rioting charges over an alleged mass attempt to breach the U.S.-Mexico border, a day after a judge threw out the cases.

Regulators prohibit new noncompetes, which impede millions of U.S. workers from getting a better job.

Starting in September of 2024, the year-long residency will allow new teachers to work with veteran teachers before taking on their own classroom.

President Biden signed a foreign aid package into law that includes a potential ban on TikTok in the U.S. Here's what experts say could happen next.

The bond includes upgrades to city streets, parks and public safety facilities. The largest single item in this year's bond is $50 million for the Dallas Police Department's proposed new training facility.

Self-driving 18-wheelers have longtime truckers worried about their livelihood and others concerned that the technology needs more testing to make sure the public is safe.

McDonald's concept restaurant CosMc's has taken its drink-focused menu to Dallas for its second-ever location.

With the country on the cusp of greeting the return of spring, a warm-weather treat is once again available for free for a limited time only.

Kelli and Michael Regan were looking for a new dog. The breeder they found online asked them to pay with gift cards.

Target, looking for ways to add sales, is relaunching its Target Circle loyalty program including a new paid membership with unlimited free same-day delivery in as little as an hour for orders over $35.

The CDC estimates the U.S. could reach 300 measles cases in 2024 — more than the recent peak two years ago.

Organic option is best when buying certain produce, especially blueberries, nonprofit group says in analysis of chemical residues.

The $872 million most likely excludes any amount UnitedHealth may have paid to hackers in ransom.

More than 20 people have been stricken after getting fake or mishandled injections in homes and spas, feds warn.

George Schappell and sister Lori, of Reading, Pa., were the world's oldest conjoined twins, according to the Guinness Book of World Records.

Within hours of the vote, the U.S. Chamber of Commerce announced it would sue to block the ban. Dallas employment attorney Rogge Dunn predicts employees will ultimately win this battle.

The closure affects both Dom's locations in Chicago and all 33 Foxtrot stores in Chicago, Texas and the Washington, D.C., area.

Texas law SB 14 prohibits drug and surgical "gender transition" interventions for minors.

The projects are expected to create at least 17,000 construction jobs and 4,500 manufacturing jobs.

After more than 40 years in business, 99 Cents Only Stores, a discount chain, announced on Thursday that it will close all 371 of its locations and cease operations.

Mary J. Blige, Cher, Foreigner, A Tribe Called Quest, Kool & The Gang, Ozzy Osbourne, Dave Matthews Band and Peter Frampton have been named to the Rock & Roll Hall of Fame.

Taylor Swift broke her own records, Spotify said, and now owns the record for the top three most-streamed albums in a single day.

The singer was found deceased at her home, a representative said.

Anticipation was growing at a fever pitch before Taylor Swift's latest album, "The Tortured Poets Department," dropped at midnight EDT. But it turned out it's actually a double album.

The singers first dated in 2003 and delighted fans when they rekindled their relationship in 2023.

Dallas artist Roberto Marquez traveled to the Rafah Crossing in Egypt and to the U.S. capital, and will attend this weekend's statewide protest in Austin.

On Friday, hundreds of thousands of fans gathered outside and all around Globe Life Field in Arlington to celebrate the Texas Rangers' historic World Series win!

Babies in the neonatal intensive care unit at several Texas Health hospitals were dressed in creative costumes for Halloween.

Is that the smell of cotton candy, beignets and brisket wafting over Fair Park? It sure is, and we are here for it!

No one puts these dolls back in their boxes. Babies in the neonatal intensive care unit at Texas Health Harris Methodist Hospital Southwest Fort Worth are pretty in pink!